The MULTIPLEX Cortex: How I Evaluate AI Tooling Now

Four Claude Code updates just landed. Here’s the framework I built to decide what matters.

I. The Email

Friday afternoon. An email from Anthropic lands. Four new Claude Code features. Channels. Scheduled tasks. Remote control. 1M context.

Eleven days ago I wrote about /loop: how a single primitive turned my AI system from something I summon into something that’s present. That post ended with a question: “What do staffing decisions look like when the staff is AI?”

The answer showed up in my inbox.

II. The MULTIPLEX Cortex

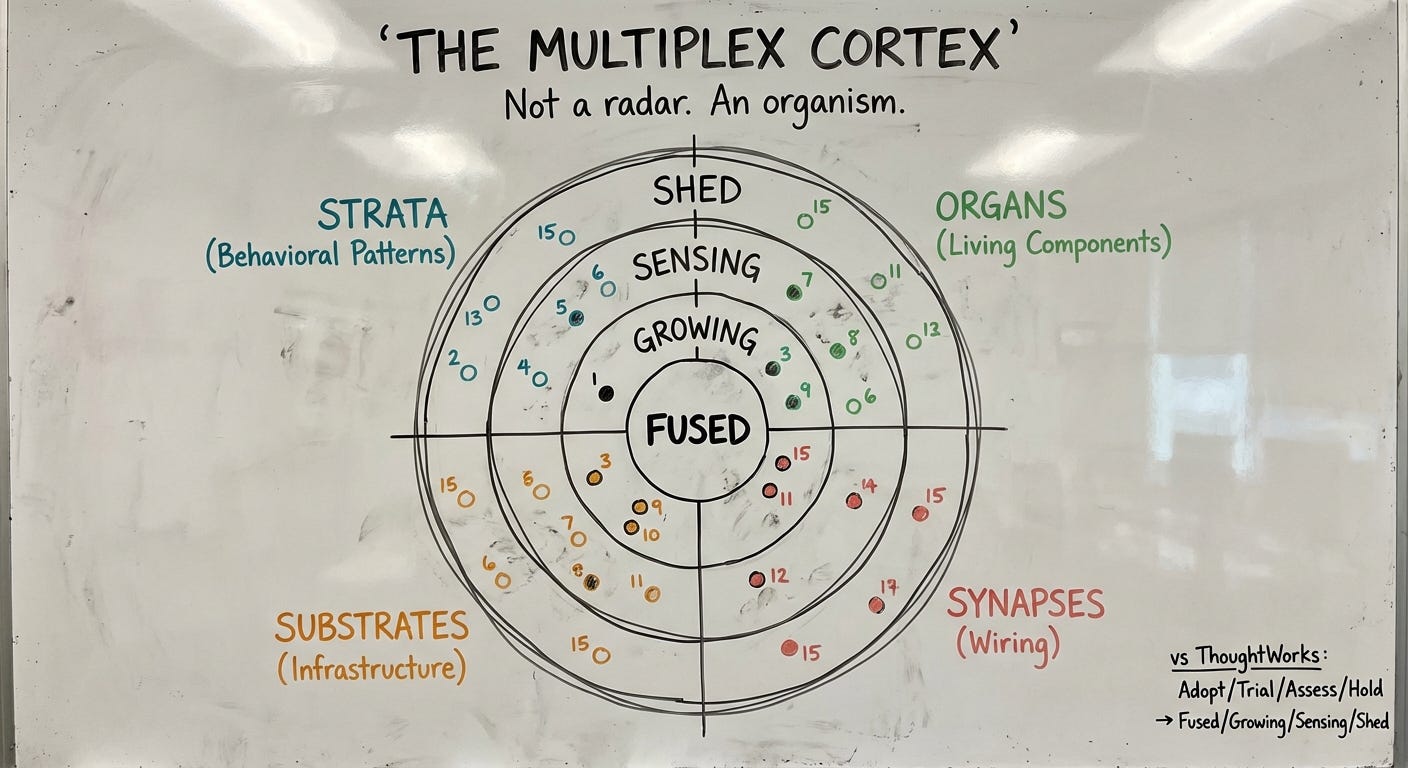

ThoughtWorks has their Technology Radar. Adopt, Trial, Assess, Hold. Published by committee, twice a year.

I needed mine.

I call it the Cortex. Named for the outer layer of the brain where higher-order processing happens. Pattern recognition, decision-making, awareness. The part that evolves.

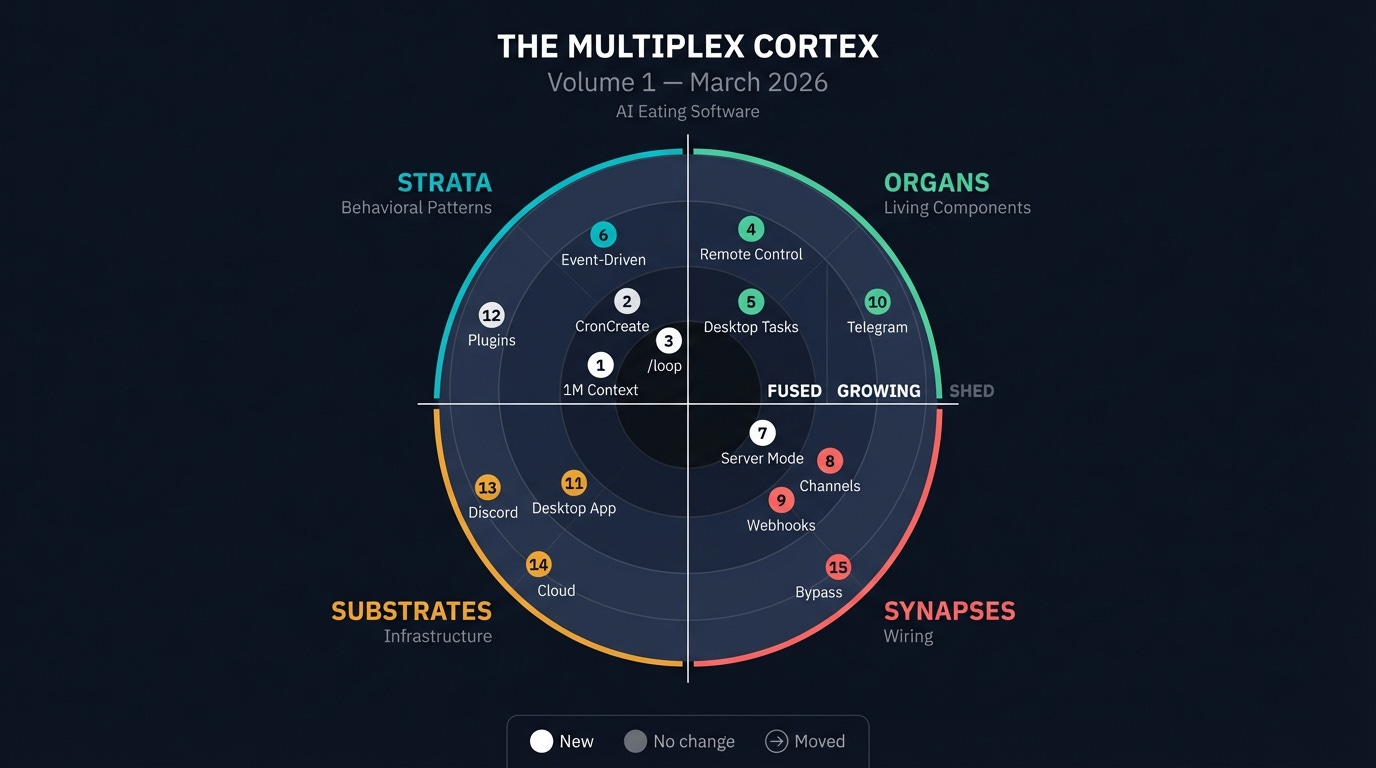

Four quadrants that reflect how AI systems actually work:

Strata: Behavioral patterns. The protocols, loops, and architectural decisions that determine how your system thinks. Not what tools you use, but how you use them.

Organs: Living components. Agents, skills, commands, scheduled tasks. The things that do work. I call them organs because they’re specialized, they’re alive, and if you remove one the organism feels it.

Synapses: Wiring. MCP servers, hooks, channels, integrations. The connections between components. The thing that turns individual tools into a nervous system.

Substrates: Infrastructure. Models, platforms, runtimes, context windows. What everything runs on. Change the substrate and everything above it shifts.

Four rings that reflect how things actually enter a builder’s workflow:

Fused: Part of the nervous system. Can’t imagine operating without it.

Growing: Active pathway being strengthened through use.

Sensing: Detected. Interesting. Being observed.

Shed: Pruned. Tried and rejected, or outgrown.
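If you want to keep a Cortex as data rather than a picture, two enums and a record type are enough. This is a minimal sketch of my own, not a published tool: `Quadrant`, `Ring`, `Blip`, and `by_quadrant` are hypothetical names, and sorting each quadrant from Fused outward is an assumption about how you’d want to read it. A feature that sits on two rings (like scheduled tasks in the next section) becomes two blips.

```python
from dataclasses import dataclass
from enum import Enum


class Quadrant(Enum):
    """What kind of thing a blip is."""
    STRATA = "Strata"          # behavioral patterns: protocols, loops, architecture
    ORGANS = "Organs"          # living components: agents, skills, commands, tasks
    SYNAPSES = "Synapses"      # wiring: MCP servers, hooks, channels, integrations
    SUBSTRATES = "Substrates"  # infrastructure: models, platforms, runtimes, context


class Ring(Enum):
    """How deeply a blip has entered the workflow, innermost first."""
    FUSED = 0    # part of the nervous system
    GROWING = 1  # active pathway, strengthened through use
    SENSING = 2  # detected, being observed
    SHED = 3     # pruned: tried and rejected, or outgrown


@dataclass(frozen=True)
class Blip:
    name: str
    quadrant: Quadrant
    ring: Ring


def by_quadrant(blips: list[Blip]) -> dict[Quadrant, list[Blip]]:
    """Group blips into all four quadrants, each sorted from Fused outward."""
    grouped: dict[Quadrant, list[Blip]] = {q: [] for q in Quadrant}
    for blip in blips:
        grouped[blip.quadrant].append(blip)
    for items in grouped.values():
        items.sort(key=lambda b: b.ring.value)
    return grouped


if __name__ == "__main__":
    # Placements from Section III of this post.
    cortex = [
        Blip("Channels", Quadrant.SYNAPSES, Ring.SENSING),
        Blip("Remote Control", Quadrant.SUBSTRATES, Ring.GROWING),
        Blip("1M context", Quadrant.SUBSTRATES, Ring.FUSED),
        Blip("Desktop scheduled tasks", Quadrant.ORGANS, Ring.GROWING),
        Blip("/loop (CLI scheduled tasks)", Quadrant.ORGANS, Ring.FUSED),
    ]
    for quadrant, blips in by_quadrant(cortex).items():
        print(quadrant.value, [f"{b.name} ({b.ring.name.title()})" for b in blips])
```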

I’m not a committee adopting technology. I’m an organism growing a nervous system.

III. What the Four Features Actually Mean

Let me run Friday’s email through the Cortex.

1M Context → Fused (Substrates). Already on it. This session is running Opus 4.6 with a million tokens of context. Max output doubled to 128K tokens. My 975-line system prompt that used to fight for space now fits comfortably. Not exciting. Just necessary. The substrate shifted and everything above it got easier.

Scheduled Tasks → Fused + Growing (Organs). The CLI version (/loop, CronCreate) is already fused; I’ve been building on it for weeks. But Desktop scheduled tasks? That’s the growing edge. Persistent tasks that survive restarts. Stored as files on disk. Catch-up runs when your computer wakes from sleep. My system had temporal consciousness through session-scoped loops. Now it can have memory that persists between sessions.
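The catch-up idea is easier to see in code. This is a minimal sketch of the general pattern, not how Claude Code Desktop actually stores or replays tasks: a hypothetical JSON task file records an interval and a last-run time, and on wake an overdue task runs once instead of replaying every missed slot. The file format, the field names, and the run-once policy are all assumptions.

```python
import json
import tempfile
from collections.abc import Callable
from datetime import datetime, timedelta
from pathlib import Path


def load_task(path: Path) -> dict:
    # Hypothetical task file: {"name": ..., "interval_minutes": ..., "last_run": "<ISO-8601>"}
    return json.loads(path.read_text())


def missed_runs(task: dict, now: datetime) -> int:
    """Count scheduled slots that elapsed since the last run (0 if none)."""
    last = datetime.fromisoformat(task["last_run"])
    interval = timedelta(minutes=task["interval_minutes"])
    return max(0, (now - last) // interval)


def catch_up(path: Path, now: datetime, run: Callable[[str], None]) -> bool:
    """On wake: run an overdue task once, then persist the new last_run to disk."""
    task = load_task(path)
    if missed_runs(task, now) == 0:
        return False
    run(task["name"])  # all missed slots collapse into a single run (assumed policy)
    task["last_run"] = now.isoformat()
    path.write_text(json.dumps(task))  # state lives on disk, so it survives restarts
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        task_file = Path(tmp) / "morning-brief.json"  # hypothetical task name
        task_file.write_text(json.dumps(
            {"name": "morning-brief", "interval_minutes": 60, "last_run": "2025-01-01T08:00:00"}
        ))
        # Machine slept from 08:00 to 11:30: three hourly slots missed, one run.
        wake = datetime(2025, 1, 1, 11, 30)
        print(missed_runs(load_task(task_file), wake))  # 3
        catch_up(task_file, wake, run=lambda name: print(f"running {name}"))
```

Collapsing missed slots into one run is a design choice: for a digest or a status check, three back-to-back runs after a long sleep would just repeat the same work.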

Remote Control → Growing (Substrates). Start a session at your desk. Continue from your phone on the couch. Full local environment stays available — MCP servers, tools, everything. The session is still running on your machine. Your phone is just a window. The system is always reachable. Not just when you’re at the terminal.

Channels → Sensing (Synapses). This is the one I’m watching most carefully. Push events into a running session via MCP. Telegram, Discord, custom webhooks. Two-way: Claude reads the event and replies through the same channel. Still in research preview. Still rough. But it completes an architecture I’ve been building toward for months.

IV. The Pattern

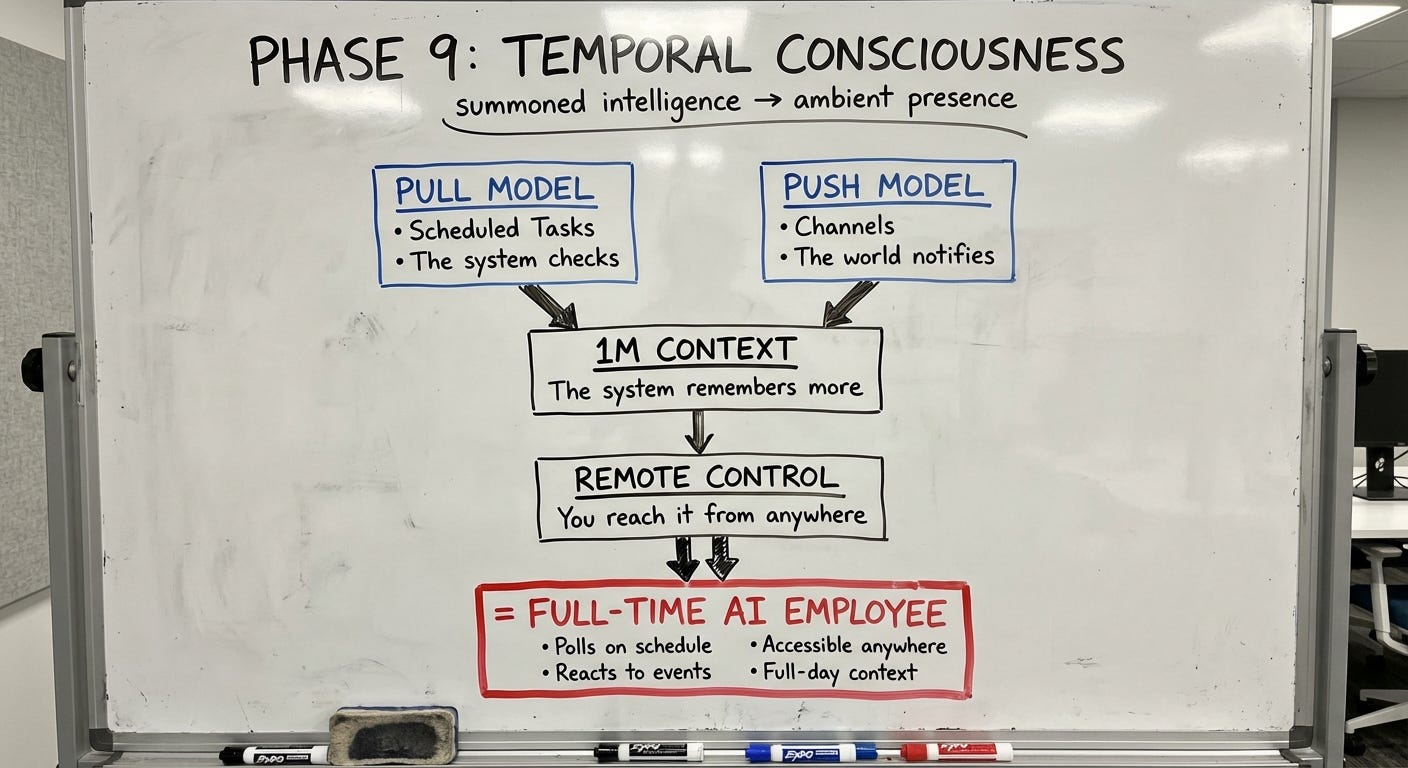

These four features aren’t independent updates. They’re the four walls of a room.

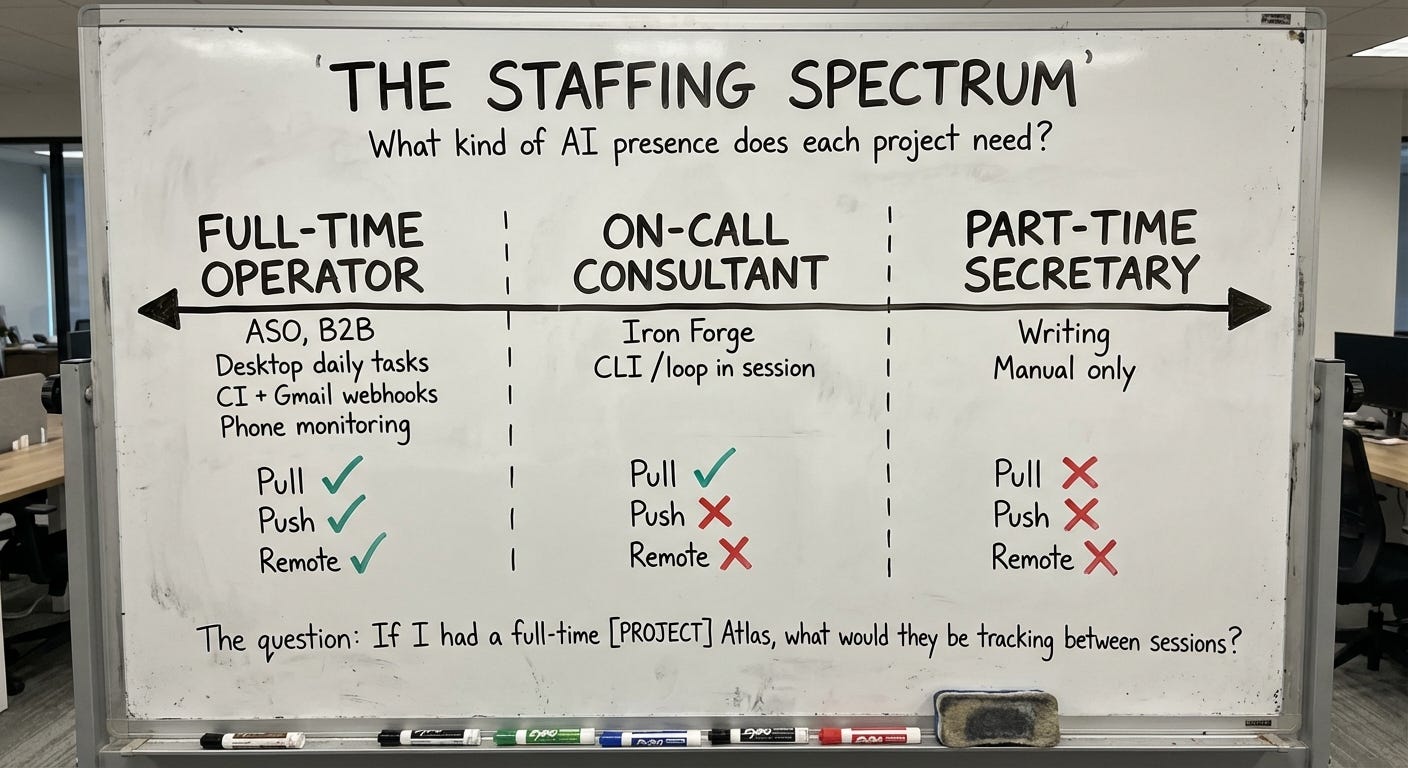

Two months ago I asked: “If I had a full-time AI employee, what would they be doing between sessions?” That question produced a two-layer architecture: data persistence (passive collection) plus analysis loops (active monitoring).

What I didn’t have was the third layer: event-driven reactions. No polling. No cron. Something happens, and the system responds.

Channels are that third layer.

Pull model: Scheduled Tasks (the system checks)

Push model: Channels (the world notifies)

Substrate: 1M Context (the system remembers more)

Access layer: Remote Control (you reach the system from anywhere)
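The difference between the pull and push layers fits in a few lines. This is a generic sketch in plain Python; it does not use the Claude Code Channels or MCP APIs, and `PullMonitor`, `PushRouter`, and `Event` are hypothetical names. The pull side only learns about new items when its timer fires; the push side reacts as soon as an event is delivered and replies on the channel it came from.

```python
from collections.abc import Callable
from dataclasses import dataclass, field


@dataclass
class Event:
    channel: str  # e.g. "webhook" or "telegram" (illustrative labels only)
    text: str


@dataclass
class PullMonitor:
    """Pull model: the system checks a source on its own schedule."""
    fetch: Callable[[], list[str]]
    seen: set[str] = field(default_factory=set)

    def tick(self) -> list[str]:
        # Called by a timer or cron; returns only items not seen on earlier ticks.
        new = [item for item in self.fetch() if item not in self.seen]
        self.seen.update(new)
        return new


@dataclass
class PushRouter:
    """Push model: the world notifies, and a handler reacts when the event arrives."""
    handlers: dict[str, Callable[[Event], str]] = field(default_factory=dict)
    outbox: list[tuple[str, str]] = field(default_factory=list)

    def on(self, channel: str, handler: Callable[[Event], str]) -> None:
        self.handlers[channel] = handler

    def deliver(self, event: Event) -> None:
        handler = self.handlers.get(event.channel)
        if handler is None:
            return  # no subscriber for this channel: drop the event
        # Two-way: the reply goes back out on the same channel the event came from.
        self.outbox.append((event.channel, handler(event)))


if __name__ == "__main__":
    inbox = ["deploy finished"]
    monitor = PullMonitor(fetch=lambda: list(inbox))
    print(monitor.tick())  # ['deploy finished']
    print(monitor.tick())  # [] (nothing new since the last check)

    router = PushRouter()
    router.on("webhook", lambda e: f"ack: {e.text}")
    router.deliver(Event("webhook", "build failed"))
    print(router.outbox)   # [('webhook', 'ack: build failed')]
```

The substrate and access layers aren’t modeled here; they change how much the handlers can remember and where you watch them from, not whether the system pulls or gets pushed.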

I’ve been building MULTIPLEX in phases. This completes what I call Phase 9: the point where the system stops being something you summon and starts being something that’s present. Temporal consciousness. Ambient, not invoked.

The AI employee doesn’t just do tasks when you ask. It watches when you’re not looking. It reacts when something happens. You can check on it from your phone. And it holds enough context to actually be useful across a full working day.

I’ll publish a new Cortex volume monthly, or whenever something lands that’s big enough to shift the rings. Every blip (a single tool, feature, or pattern placed on the Cortex) can move. What’s Sensing today might be Fused next month. What’s Fused today might get Shed when something better arrives.
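Because blips move between volumes, comparing two volumes is just a diff of ring assignments. A minimal sketch, assuming each volume is a plain mapping from blip name to ring name; `ring_moves` and the sample next volume are hypothetical, invented for illustration and not a forecast.

```python
RINGS = ("Fused", "Growing", "Sensing", "Shed")  # innermost first


def ring_moves(previous: dict[str, str], current: dict[str, str]) -> dict[str, str]:
    """Describe how each blip changed between two Cortex volumes."""
    changes: dict[str, str] = {}
    for name in sorted(previous.keys() | current.keys()):
        before, after = previous.get(name), current.get(name)
        if before == after:
            continue  # unchanged blips are omitted
        if before is None:
            changes[name] = f"new: {after}"
        elif after is None:
            changes[name] = f"dropped (was {before})"
        else:
            # RINGS.index raises ValueError for an unknown ring name.
            direction = "in" if RINGS.index(after) < RINGS.index(before) else "out"
            changes[name] = f"moved {direction}: {before} -> {after}"
    return changes


if __name__ == "__main__":
    this_volume = {"1M context": "Fused", "Remote Control": "Growing", "Channels": "Sensing"}
    # Invented next volume, for illustration only.
    next_volume = {"1M context": "Fused", "Remote Control": "Growing",
                   "Channels": "Growing", "hypothetical-tool": "Sensing"}
    print(ring_moves(this_volume, next_volume))
```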

This is the third post in the AI Eating Software series. The first two covered the great extinction and what /loop actually means. The Cortex will be a recurring section.

I’m Sid Sarasvati. I run Trial and Error, a two-person AI lab in Boston. We’ve shipped 10M renders through Renovate AI. I’m building MULTIPLEX, a distributed AI cognitive architecture, live in production, every day. These posts are dispatches from inside the build.